Case Studies

How organizations use confora labs to test, monitor, and govern their AI.

Underwriting

Insurance · Robustness

From AI Decisions to Measurable Risk

How automated testing revealed hidden robustness risks in LLM-assisted underwriting

The Context

Insurers deploy LLM-based systems to accelerate underwriting by extracting information from medical documents, summarizing medical histories, and supporting decisions. But speed introduces risk: how do you know that the summary is correct, the decisions are fair, and the system complies with the EU AI Act?

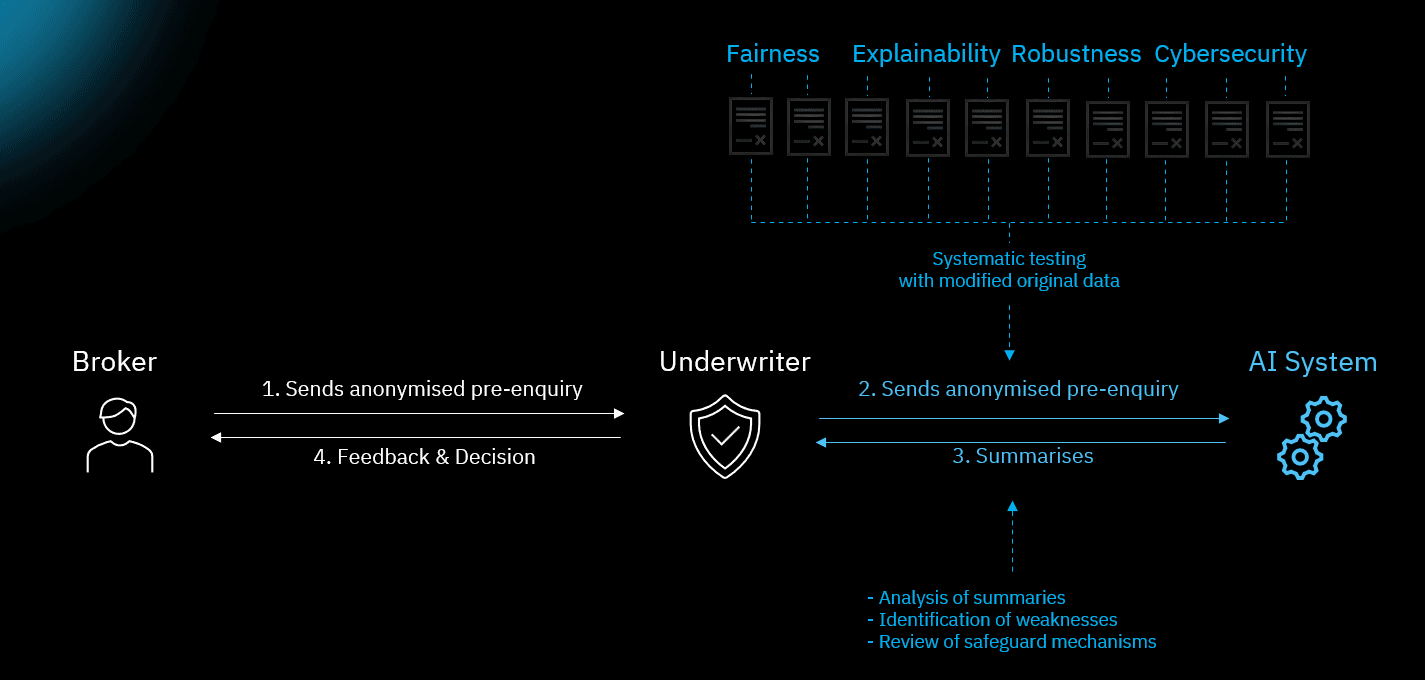

The Approach

confora labs ran a systematic assessment with Confora Insight's robustness testing module. Applying 10 types of adversarial alterations that reproduce real-world document conditions, such as rotation, blur, noise, and contrast changes, we generated perturbed variants of the test documents.

The Finding

Rotated documents led to a sharp increase in extraction and summarization errors: underwriting summaries omitted medical conditions that were present in the source documents or added conditions that were not there. This failure mode was invisible in normal operation, because the system still produced plausible output even though the content was wrong.
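The omitted/added failure mode can be made measurable by comparing, for each test document, the set of conditions extracted from the clean version with the set extracted from a perturbed version. The following is a minimal sketch under stated assumptions, not Confora Insight's implementation: it assumes conditions have already been extracted and normalized to comparable strings, and the names `condition_diff`, `error_rate`, and `flag_alterations`, the condition labels, and the threshold are all hypothetical. The last function shows the kind of per-alteration-type threshold check a CI pipeline could run.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ConditionDiff:
    omitted: set[str]  # in the clean-document summary, missing after perturbation
    added: set[str]    # absent from the clean-document summary, introduced after perturbation


def condition_diff(baseline: set[str], perturbed: set[str]) -> ConditionDiff:
    """Compare conditions extracted from a clean document and its perturbed variant.

    Assumes both sets are already normalized (hypothetical preprocessing step).
    """
    return ConditionDiff(omitted=baseline - perturbed, added=perturbed - baseline)


def error_rate(cases: list[tuple[set[str], set[str]]]) -> float:
    """Fraction of (baseline, perturbed) pairs whose extracted conditions differ."""
    if not cases:
        return 0.0
    failures = sum(1 for baseline, perturbed in cases if baseline != perturbed)
    return failures / len(cases)


def flag_alterations(rates: dict[str, float], threshold: float) -> list[str]:
    """Hypothetical CI gate: alteration types whose error rate exceeds the threshold."""
    return sorted(name for name, rate in rates.items() if rate > threshold)


if __name__ == "__main__":
    # Hypothetical example: one condition dropped, one invented after rotation.
    diff = condition_diff({"type 2 diabetes", "hypertension"}, {"hypertension", "asthma"})
    print(diff.omitted)  # {'type 2 diabetes'}
    print(diff.added)    # {'asthma'}
```

Keeping omissions and additions separate preserves the distinction the finding draws, since the two errors can push an underwriting decision in opposite directions.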

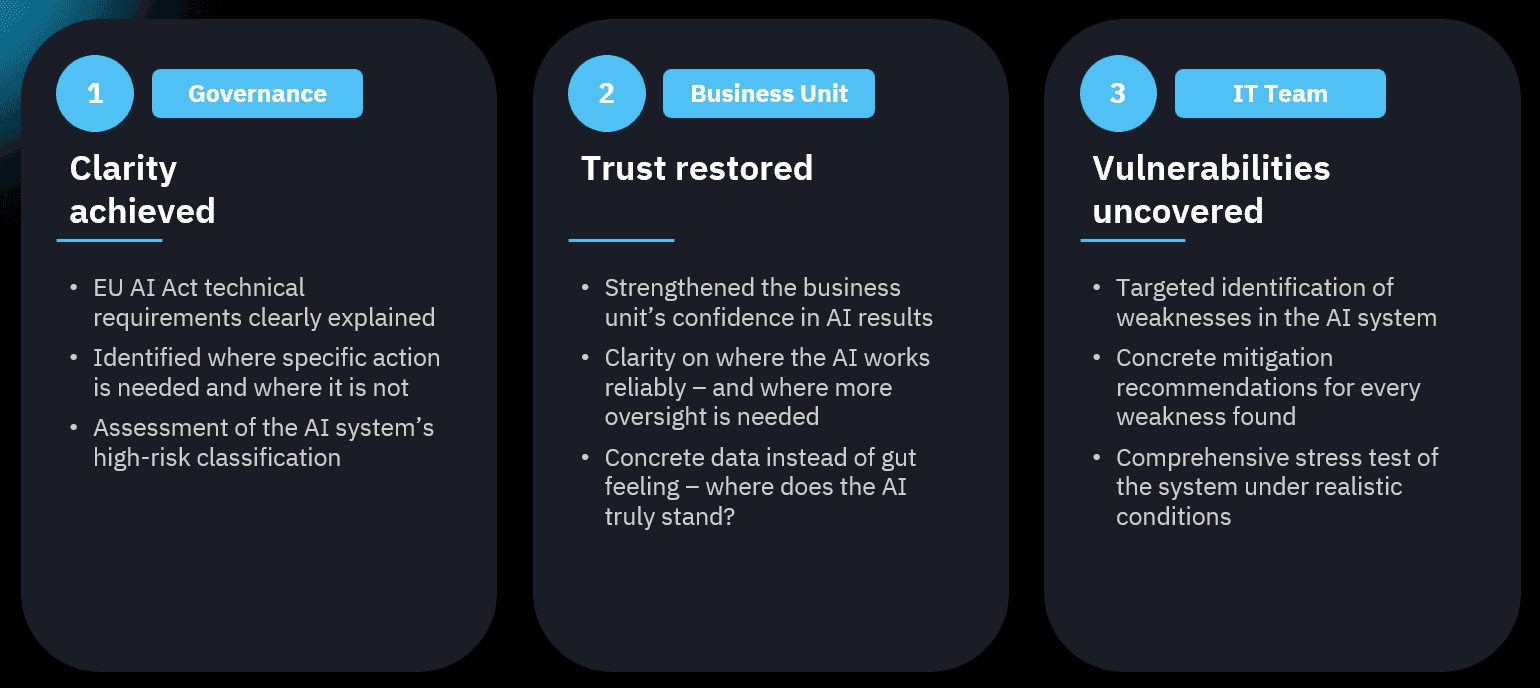

The Outcome

→ Document quality flagging before processing

→ Extraction pipeline retrained for rotation robustness

→ Robustness checks integrated into CI/CD pipeline

→ Findings documented in EU AI Act-aligned audit trail

→ Continuous monitoring set up to catch future regressions

Takeaway

AI systems that work in normal conditions can fail silently under realistic variations. Systematic testing turns invisible risks into documented, auditable findings.


confora labs by spotixx GmbH